On a Friday afternoon in late February 2026, President Donald Trump got on Truth Social and typed something that would shake the AI industry to its core.

“I am directing EVERY Federal Agency in the United States Government to IMMEDIATELY CEASE all use of Anthropic’s technology. We don’t need it, we don’t want it, and will not do business with them again!”

He called Anthropic — the company behind Claude, one of the most powerful AI assistants on the planet — “Leftwing nut jobs.” Defense Secretary Pete Hegseth went a step further, slapping the San Francisco startup with a designation usually reserved for Chinese tech giants and Russian state-owned firms: a supply-chain risk to national security.

Just like that, a $200 million government contract was gone. Anthropic was, on paper, in the same category as Huawei.

Then something nobody saw coming happened.

America opened the App Store and started downloading.

The fight that started it all

To understand how we got here, you have to go back a few months. Anthropic, founded in 2021 by former OpenAI employees including CEO Dario Amodei, had won a landmark Pentagon contract. Claude was the only AI model cleared for deployment on classified military networks, through a partnership with data analytics company Palantir (Axios). It was a massive deal. A signal that Anthropic had arrived.

But there were two conditions Anthropic insisted on. Just two.

Claude would not be used for mass domestic surveillance of Americans. Claude would not be used to make autonomous weapons decisions without human involvement.

That was it. Two lines in the sand.

The Pentagon said it was not interested in such uses and would only deploy the technology in legal ways, but it also insisted on access without any limitations (NPR). Their position was simple: once we buy the tool, we decide how it’s used.

Months of private negotiations became public. Then they got ugly.

In posts on X, Pentagon undersecretary Emil Michael called Amodei “a liar” with a “God complex,” accusing the CEO of wanting to “personally control the U.S. military” (CNN). Hegseth set a hard deadline, 5:01 PM on a Friday, for Anthropic to drop its guardrails or face consequences.

Amodei’s response came 24 hours before that deadline. It did not blink.

“We cannot in good conscience accede to their request. No amount of intimidation or punishment from the Department of War will change our position on mass domestic surveillance or fully autonomous weapons.”

At 5:01 PM, the deadline passed. Within the hour, Trump was on Truth Social. The ban was real.

The plot twist nobody expected

Here’s where the story gets strange.

Hours after the Trump administration’s announcement, OpenAI CEO Sam Altman posted on X that his company had struck a deal with the Defense Department to deploy its models on the department’s classified networks (Fortune).

The world waited to see how OpenAI had threaded the needle Anthropic couldn’t.

They hadn’t. Not really.

Altman wrote that two of OpenAI’s most important safety principles are “prohibitions on domestic mass surveillance and human responsibility for the use of force, including for autonomous weapon systems” (Fortune). The exact same two principles Anthropic was banned for insisting on.

Anthropic stood firm. Got labeled a supply-chain risk.

OpenAI held the same line. Got a contract.

Retired Air Force General Jack Shanahan, a former leader of the Pentagon’s AI initiatives, wrote that the government “painting a bullseye on Anthropic garners spicy headlines, but everyone loses in the end.” He said Anthropic’s red lines were “reasonable,” and that Claude was already deeply embedded across the military and intelligence community (NPR).

Senator Mark Warner, the top Democrat on the Senate Intelligence Committee, said the penalties raised “serious concerns about whether national security decisions are being driven by careful analysis or political considerations” (Federal News Network).

But none of that stopped the ban.

The Streisand Effect, AI Edition

Here’s what the administration didn’t account for: the American public was watching.

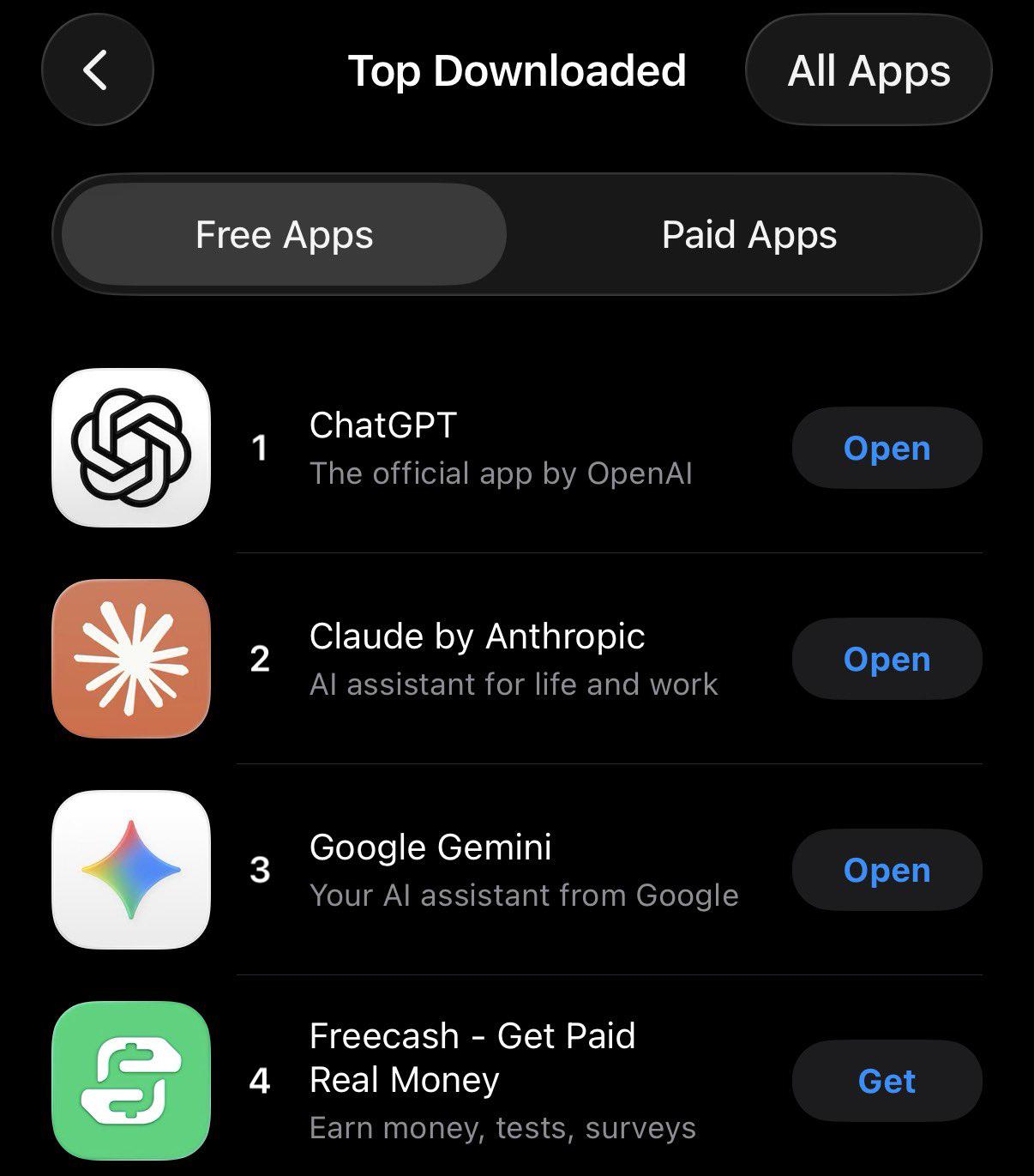

On January 30th, Claude sat at #131 on the Apple App Store. It hovered in the top 20 for much of February. ChatGPT had held the #1 spot for most of the month (Evrim Ağı).

Then Friday happened.

Saturday morning, people woke up and read about a company that had refused to let its AI be used to surveil American citizens, and that had held that line even when threatened with “major civil and criminal consequences” from the White House.

By Saturday night, Claude had jumped to #1. ChatGPT fell to #2. Google’s Gemini dropped to #4 (Evrim Ağı).

Not because of a new feature. Not because of a marketing campaign. Because people found out where Anthropic drew its ethical lines — and decided that mattered.

Anthropic’s free user base had grown over 60% since January, with daily sign-ups tripling since November and breaking all-time records every day that week. Paid subscribers had more than doubled in 2026 (Evrim Ağı).

At the start of the year, Claude was #42. On Saturday night, it was #1.

The backlash that followed

OpenAI’s Pentagon deal didn’t just raise eyebrows. It sparked a movement.

The Instagram account “quitGPT” gained about 10,000 followers after the news broke. A Reddit post about OpenAI winning the Pentagon contract racked up 30,000 upvotes under the message “Cancel and Delete ChatGPT!!!” (Storyboard18).

A viral video appeared to show chalk art outside Anthropic’s offices in San Francisco reading “you give us courage” (Storyboard18).

People pointed to OpenAI president Greg Brockman’s $25 million donation to a pro-Trump super PAC as part of their reasoning. The optics weren’t great for a company that had just signed a deal with the administration that banned its competitor.

And in a remarkable moment, even Sam Altman weighed in. The supply-chain risk designation against Anthropic, he said publicly, was “a very bad decision” — and he hoped it would be reversed.

What this actually means

Let’s be honest about the full picture. ChatGPT is still right behind Claude on the app store charts and has a first-mover advantage. ChatGPT now counts over 900 million weekly users (Storyboard18). This isn’t a death blow to OpenAI.

But something unusual happened this week. Something that doesn’t often happen in Silicon Valley, where companies routinely bend to government pressure, soften their stances, and quietly give in when the money gets big enough.

A company was given a choice between a $200 million contract and its principles. It chose its principles — publicly, loudly, and without hedging. And then the people who use these tools every day noticed.

Whether Anthropic wins its legal fight against the supply-chain designation remains to be seen. Whether the ban gets reversed, the politics shift, or this moment fades into the next news cycle — all unknown.

What isn’t unknown is this: for one extraordinary weekend in late February 2026, the most downloaded app in America wasn’t the one backed by a government contract.

It was the one that told the government no.