Last Tuesday, Dario Amodei walked into the Pentagon and sat across from Defense Secretary Pete Hegseth. By Friday afternoon, his company had been blacklisted by the President of the United States.

The issue wasn’t money, and it wasn’t incompetence. It was two sentences buried in a contract — a contract that Anthropic itself had pursued aggressively for over a year.

Anthropic’s Claude was the first commercial AI model ever deployed on the U.S. military’s classified networks, part of a deal worth up to $200 million signed last summer through a partnership with Palantir and Amazon Web Services. The company was proud of it. But inside the contract were two hard limits that Anthropic had insisted on from the start: Claude could not be used for mass domestic surveillance of Americans, and it could not power fully autonomous weapons — systems that choose and kill targets without a human making the final call.

Those conditions had, by Anthropic’s own account and by the accounts of defense analysts who spoke to Bloomberg, never once slowed down actual military operations. Nobody had bumped into them. The Pentagon itself, when pressed, said it had no current plans to use AI for domestic surveillance or autonomous lethal targeting.

And yet, those two sentences had become, in Amodei's words, the crux of months of increasingly hostile negotiations.

The Tuesday meeting was blunt. Hegseth gave Amodei a deadline: remove the restrictions, allow Claude to be used for “all lawful purposes” — or face consequences. The deadline was 5:01 p.m. on Friday, February 28th.

The threats were serious. The Pentagon floated invoking the Defense Production Act, a Korean War-era law that gives the president emergency authority to commandeer domestic industries. More concretely, it threatened to designate Anthropic a “supply chain risk” — a classification normally reserved for foreign companies suspected of espionage or sabotage. Companies like Huawei. The idea of applying it to a San Francisco AI startup founded by former OpenAI researchers would have been laughable in any other news cycle.

Pentagon spokesperson Sean Parnell was not subtle about the administration’s position: “We will not let ANY company dictate the terms regarding how we make operational decisions.”

Overnight Wednesday, the Pentagon sent Anthropic new contract language, framed as a compromise. Anthropic’s legal team read it and concluded it changed essentially nothing — that the new language “was paired with legalese that would allow those safeguards to be disregarded at will.”

Thursday evening, Amodei posted his response publicly. He acknowledged, carefully and without ambiguity, that the Pentagon, not private companies, makes military decisions. But he drew a line anyway. Domestic mass surveillance and fully autonomous weapons, he wrote, are uses “simply outside the bounds of what today’s technology can safely and reliably do.” Permitting them under the contract wasn’t a formality. It was a substantive ask, and one Anthropic wasn’t willing to meet.

“The Pentagon’s threats do not change our position: we cannot in good conscience accede to their request.”

Friday afternoon, Trump posted on Truth Social ordering all federal agencies to immediately halt use of Anthropic’s products. Hegseth signed the supply chain risk designation. The practical effect: any company with Pentagon contracts would need to prove it had no relationship with Anthropic. That requirement could quietly unravel Anthropic’s enterprise business, which depends heavily on large corporations, many of which also work with the federal government.

The $200 million contract itself wouldn’t threaten Anthropic’s survival; the company is valued at roughly $380 billion. The designation is another matter.

Law professors and legal analysts who spoke to CNN noted that the supply chain risk designation carries specific procedural requirements, including a formal risk assessment and notification of Congress, and that there was no evidence either had been completed. Anthropic said it would challenge the designation in court.

Emil Michael, the Pentagon’s Undersecretary for Research and Engineering, who had been involved in the negotiations, posted on X that Amodei “is a liar and has a God-complex” and wanted to personally control the U.S. military.

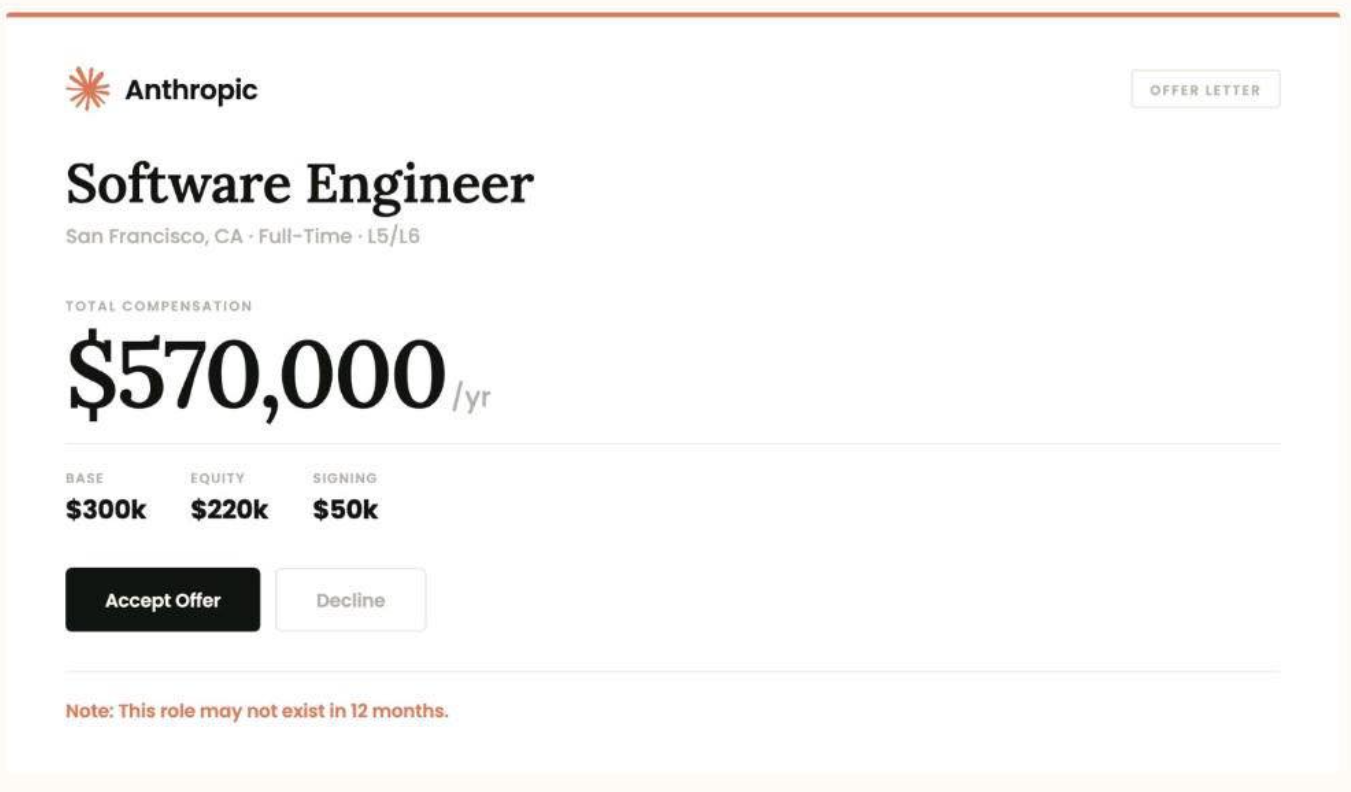

A few hours later, Sam Altman announced on X that OpenAI had struck a new deal with the Pentagon to deploy its models on classified networks.

The conditions OpenAI agreed to: no mass domestic surveillance. Human oversight required for decisions involving lethal force. No fully autonomous weapons without a person in the loop.

The same two conditions Anthropic was blacklisted for refusing to remove.

Earlier in the week, Altman had publicly said he supported Anthropic’s position. He told CNBC that OpenAI holds the same red lines, and that working with the military was appropriate “as long as it is going to comply with legal protections.”